
On File Management (9)

Part nine. Apple's Achilles heel.

Long before he became known for his MacBook hack, IBM security researcher David Maynor had a computer security epiphany.

Maynor gave a series of lectures where he talked about computers within computers. His MacBook hack happened to illustrate the concept. Peripheral devices run by drivers running in privileged mode were susceptible - and if compromised, could bring down an entire system. The same holds for more internal functions such as DMA.

Maynor also harped on the complexity of modern personal computer hardware and how each hardware and software component must act as if it's a computer unto itself and be able to protect itself accordingly.

A computer file system is such a component. And although most operating systems have adequately designed file system components, Apple's do not.

Apple have had myriad scandals over the years related to their file systems - hosed user systems, massive data loss, and so forth. No other vendor need admit to having such an embarrassing record. Clearly something is very wrong.

Let's start at the very beginning - the very 'bottom' of things.

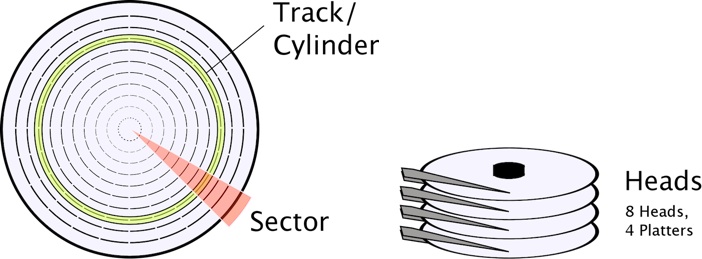

Head/Cylinder/Sector

Hard drives are analog devices. They grow cheaper per storage unit at a geometric rate. They have moving parts. But they're not yet obsolete. A binary digit does not have a corresponding unit on a hard drive. Precisely how hard drives store (and read back) data isn't important - what's important is that they work and are documented to work.

Apple do a lot of testing on their hard drives before the computers leave the factory. The phrase '100,000 butterfly reads and writes' has been quoted. Wintel OEMs are happy if they can get a Microsoft welcome screen - then they pack the junk away for shipment. An Apple hard drive that fails the butterfly test gets sent back.

Hard drive storage is specified in terms of head/cylinder/sector. Hard drives are stacks of (virtual) platters accessible on both sides. The hard drive controller can read or write from multiple 'heads' at any one time within the same cylinder.

A sector is a chunk of a single revolution ('track') on a hard drive off a specific head in a specific cylinder. A cylinder contains all the tracks on all heads at a specific offset. It's like a CD except the tracks are concentric and never meet.

Heads are zero-indexed, cylinders as well, but sectors are one-indexed. FWIW.

Actual reads and writes to a hard drive are specified in terms of a succession of specifications for head/cylinder/sector. Sectors today are almost always 512 bytes.
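The arithmetic behind such a specification can be sketched in a few lines. This is a minimal illustration, not any vendor's driver code: it maps a head/cylinder/sector triple to a linear sector number using the conventional formula. The function name and the geometry of 16 heads and 63 sectors per track are assumptions chosen for the example.

```python
SECTOR_SIZE = 512  # bytes per sector, as stated above


def chs_to_linear(cylinder, head, sector, heads_per_cylinder, sectors_per_track):
    """Map a cylinder/head/sector triple to a zero-based linear sector number."""
    if cylinder < 0:
        raise ValueError("cylinders are zero-indexed and cannot be negative")
    if not 0 <= head < heads_per_cylinder:
        raise ValueError("head out of range (heads are zero-indexed)")
    if not 1 <= sector <= sectors_per_track:
        raise ValueError("sector out of range (sectors are one-indexed)")
    return (cylinder * heads_per_cylinder + head) * sectors_per_track + (sector - 1)


# Assumed geometry for illustration only: 16 heads, 63 sectors per track.
first = chs_to_linear(0, 0, 1, 16, 63)       # very first sector on the drive
next_head = chs_to_linear(0, 1, 1, 16, 63)   # first sector under the next head
byte_offset = next_head * SECTOR_SIZE        # where that sector starts, in bytes
```

Note the one-indexed sector: the first sector on the drive is cylinder 0, head 0, sector 1, and it maps to linear sector 0.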

Operating system input/output units are almost always 4096 bytes - 8 sectors. This is to say the OS file system doesn't want to bother with data quantities less than that. A file of one byte consumes 4 KB of disk space.
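The rounding is easy to work out. The sketch below assumes the 4096-byte unit and 512-byte sector mentioned above and ignores file system metadata and any special handling a particular file system might have for tiny files. The function name is made up for the example.

```python
SECTOR_SIZE = 512
IO_UNIT = 4096  # 8 sectors


def disk_space_consumed(file_size, unit=IO_UNIT):
    """Round a file size in bytes up to a whole number of I/O units."""
    if file_size < 0:
        raise ValueError("file size cannot be negative")
    units = -(-file_size // unit)  # ceiling division
    return units * unit


one_byte = disk_space_consumed(1)        # a whole unit for a single byte
exact_fit = disk_space_consumed(4096)    # exactly one unit
one_over = disk_space_consumed(4097)     # spills into a second unit
sectors_per_unit = IO_UNIT // SECTOR_SIZE
```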

The file system communicates with the device driver for the hard drive so everything gets read or written.

Each of the above listed levels of code has to be tested meticulously. There's a reason driver code is written in a more formalised fashion. There's a reason driver coders are more meticulous. Their code is supporting the whole house of cards.

The slightest error and everything falls apart.

Each of the above listed levels of code has to be tested independently. There's no point in building and testing a higher level if the underlying code still isn't reliable. And so forth.

And writing code this crucial takes time - considerably more time than scraping together a user land application. And the testing process isn't trivial either: it has to be rigorous - very rigorous.

The hallmark for any component in here is that it must be self-protecting. Almost like an Asimov law of robotics. The component must never accept and perform commands that corrupt it or destroy another component in the computer.

This means that each component has to have an armada of sanity checks - code that makes sure the requests are legitimate, the parameters are 'in bounds', and so forth. Nothing can be left to the providence of those acting at a higher level.
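What such sanity checks look like can be shown with a toy. The class below is a hypothetical in-memory block device, not code from any real driver: every request is validated before anything is touched, and a bad request is refused no matter who sent it.

```python
class ToyBlockDevice:
    """Hypothetical in-memory block device that checks every request itself."""

    SECTOR_SIZE = 512

    def __init__(self, sector_count):
        if not isinstance(sector_count, int) or sector_count <= 0:
            raise ValueError("sector_count must be a positive integer")
        self._sector_count = sector_count
        self._data = bytearray(sector_count * self.SECTOR_SIZE)

    def _check_range(self, first_sector, count):
        # Never trust the caller: verify types, then bounds.
        if not isinstance(first_sector, int) or not isinstance(count, int):
            raise TypeError("sector numbers and counts must be integers")
        if count <= 0:
            raise ValueError("request must cover at least one sector")
        if first_sector < 0 or first_sector + count > self._sector_count:
            raise ValueError("request is out of bounds")

    def write(self, first_sector, payload):
        # Only whole sectors are accepted.
        if len(payload) == 0 or len(payload) % self.SECTOR_SIZE != 0:
            raise ValueError("payload must be a whole number of sectors")
        count = len(payload) // self.SECTOR_SIZE
        self._check_range(first_sector, count)
        start = first_sector * self.SECTOR_SIZE
        self._data[start:start + len(payload)] = payload

    def read(self, first_sector, count):
        self._check_range(first_sector, count)
        start = first_sector * self.SECTOR_SIZE
        return bytes(self._data[start:start + count * self.SECTOR_SIZE])
```

A write that would run off the end of the device raises an error and leaves the stored data untouched. The caller's good intentions don't enter into it.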

Apple have a very bad reputation in the industry for this type of coding.

- A very early iteration of iTunes hosed user drives. The install script wasn't rigorously tested.

- The first ever release of Safari hosed user drives because of a lack of rigour in coding at several levels.

- The infamous 'massive data loss' bug was an inexcusable design error that caused widespread destruction.

And so forth.

The File System Component

File systems have to protect themselves. Their first priority has to be their own self-preservation. This care and caution has to extend all the way up to user interaction level. No code snippet and no user choice can be allowed to destroy the file system or a part thereof. Their second priority is to protect the user.

The file system component must first and foremost see that no operation corrupts the file system and thereafter that users don't shoot themselves in the foot.

Apple documentation for Carbon file management APIs in early iterations of OS X was riddled with warnings to developers to not use the APIs under certain conditions as user systems could be hosed.

A file system that relies on the goodwill of developers and users for self-preservation is not a file system worth preserving. The protective code's supposed to be built into the file system component itself.

Things don't work like that on Apple systems.

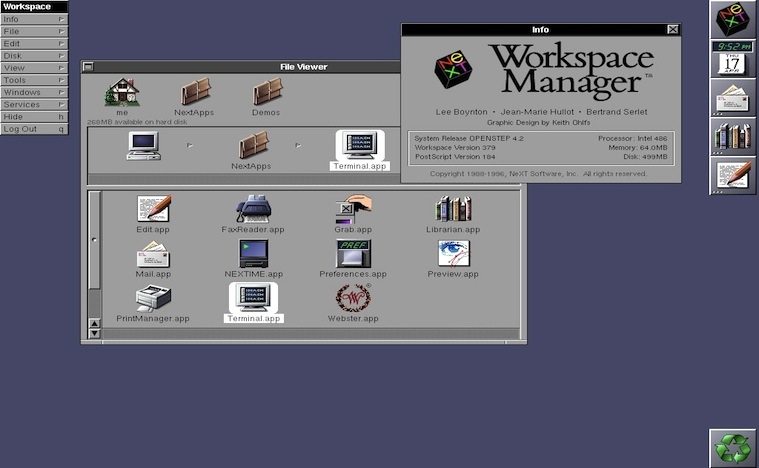

The architects of OpenStep found a good way to divide the labour. OpenStep - and Cocoa today - have essentially two aggregates of code classes.

- Foundation framework. This is abstract code with no user interaction.

- AppKit framework. This is code that will be seen by the computer user.

The AppKit code classes use Foundation classes when performing their duties.

A good example of how this works (or should work or once upon a time worked) can be seen in OpenStep/Cocoa file system management. The basic code class - in the Foundation framework - is NSFileManager. This class contains all the intrinsics for file management on NeXT/Apple systems. The user never interacts with it and it never generates visual interface components.

The 'higher level' class that does interact with the user is known as NSWorkspace. NSWorkspace is therefore a part of the AppKit framework. NSWorkspace had a visual interface on OpenStep but doesn't have one any longer - it's been abducted by Finder.

Yet the following string dump clearly shows where NSWorkspace resides to this day - in the 'visual' AppKit framework.

```
NSWorkspaceDidLaunchApplicationNotification
NSWorkspaceDidMountNotification
NSWorkspaceDidTerminateApplicationNotification
NSWorkspaceDidUnmountNotification
NSWorkspaceDidWakeNotification
NSWorkspaceSessionDidBecomeActiveNotification
NSWorkspaceSessionDidResignActiveNotification
NSWorkspaceWillLaunchApplicationNotification
NSWorkspaceWillPowerOffNotification
NSWorkspaceWillSleepNotification
NSWorkspaceWillUnmountNotification
NSWorkspaceDidPerformFileOperationNotification
NSWorkspace
NSWorkspaceCenterDeviceObservers
NSWorkspaceCenterApplicationObservers
NSWorkspaceCenterPowerObservers
NSWorkspaceCenterSessionObservers
NSWorkspaceCenter
NSWorkspaceStatic
NSWorkspaceObserver
/SourceCache/AppKit/AppKit-949.54/AppKit.subproj/NSWorkspace.m
```

Why is NSWorkspace in a framework for visual (user interaction) code classes? Because it used to be visual.

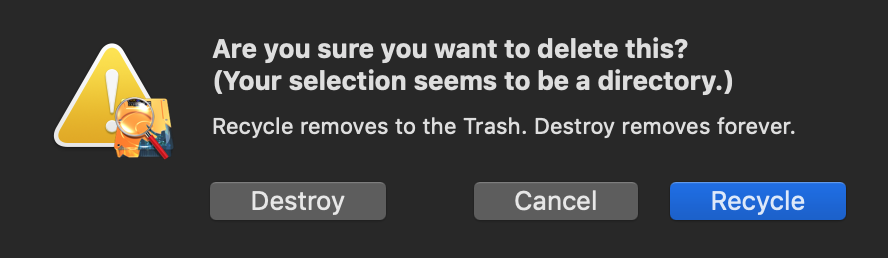

Why have a 'visual' file management component? That's obvious: so the component can exercise the important 'forgiveness principle' (see IBM SAA/CUA) when needed. In street corner parlance: the file system must be able to issue repeated 'are you sure' alerts to the user when necessary.

Apple's version of what's left of OpenStep code no longer does that. The GNUstep code does but not Apple's.

GNUstep's code is riddled with sanity checks and user alerts at redundant levels. Bad things don't happen with GNUstep code and GNUstep use. GNUstep code is based on OpenStep - what Cocoa used to be.

Merge versus Replace

Every file system in the world save Apple's and those derived from Apple's uses a file operation concept known today by a term that had to be invented to accommodate Apple's strange way of doing things.

Give the coders at Panic credit for recognising this in their FTP client - they have to, as every file system outside Cupertino uses 'merge' instead of Apple's 'replace'.

What's this all about? And what's the difference between 'merge' and 'replace'? Even the Unix command line tools on OS X use 'merge'. Why are people at Apple being so different - so 'difficult'?

One thing's certain: it's not in the user's best interests.

Apple file APIs are written to fail when a destination already exists. No one else's are - only Apple's.

Take an easily understandable example. Create two file hierarchies of your own. Put them under ~/Documents to make things simple. Don't overdo it - do enough so you clearly see what's going on. Compare the way Unix command line tools work with the way Apple's ideas 'work'.

We need two hierarchies. We're going to 'copy' and 'move' between them. First with the command line Unix tools and then with Apple's own 'visual' utilities.

```
Test1:
~/Documents/Test1
~/Documents/Test1/Movies
    Movies1.txt
~/Documents/Test1/Music
    Music1.txt
~/Documents/Test1/Pictures
    Pictures1.txt

Test2:
~/Documents/Test2
~/Documents/Test2/Movies
    Movies2.txt
~/Documents/Test2/Music
    Music2.txt
~/Documents/Test2/Pictures
    Pictures2.txt
```

- ~/Documents has two subdirectories - Test1 and Test2.

- Test1 and Test2 have identical subdirectories - Movies, Music, Pictures.

- The subdirectories of Test1 and Test2 have different files - they have no files in common.

Try to guess what will happen before you try it. From the command line:

- 'cp -R ~/Documents/Test2/* ~/Documents/Test1' will result in all files being found in ~/Documents/Test1.

From 'TFF':

- Dragging Movies, Music, and Pictures inside ~/Documents/Test2 to ~/Documents/Test1 will destroy the files previously inside ~/Documents/Test1. Copy and move both. (Actually the three directories are also destroyed and created again.)

Standard 'non-Apple' copy and move operations don't destroy things on the way in - an attempt to create a directory that already exists will of course fail; but the code doesn't worry about the return code from such an operation - it only checks to see the directory exists afterwards.

That's 'merge'.
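The logic just described fits in a handful of lines. This is a minimal sketch in Python, not the source of any real copy tool, and the function name is made up. It tries to create each directory, shrugs if the attempt fails because something is already there, and only insists that a directory exists afterwards. Like the command line tools, it overwrites a same-named regular file but leaves everything else in the destination alone.

```python
import os
import shutil


def merge_copy(src_dir, dst_dir):
    """Copy the contents of src_dir into dst_dir, keeping what is already there."""
    try:
        os.mkdir(dst_dir)
    except FileExistsError:
        pass  # the failure itself doesn't matter; the check below does
    if not os.path.isdir(dst_dir):
        raise NotADirectoryError(dst_dir)
    for entry in os.scandir(src_dir):
        target = os.path.join(dst_dir, entry.name)
        if entry.is_dir(follow_symlinks=False):
            merge_copy(entry.path, target)   # descend; never remove the target
        elif os.path.isdir(target):
            raise IsADirectoryError(target)  # refuse to drop a file onto a directory
        else:
            shutil.copy2(entry.path, target, follow_symlinks=False)
```

Called with Test2 as the source and Test1 as the destination, it leaves both Movies1.txt and Movies2.txt in Test1/Movies, and likewise for Music and Pictures.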

Apple's file management code requires the destination (at the topmost level) to be removed before things can proceed. A user can copy or move files individually and then get expected visual alerts from 'TFF' - but copying and moving 'hierarchies' with a number of hierarchical levels doesn't work the same way. The topmost target must always be removed first - whether it be one single file or a directory containing thousands of files.

That's 'replace'.
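For comparison, here is the same operation with 'replace' semantics. This is a sketch that models the behaviour described above - it is not Apple's code and the function name is made up. Whatever sits at the topmost destination is removed first, and only then is the source copied in.

```python
import os
import shutil


def replace_copy(src_dir, dst_parent):
    """Copy src_dir into dst_parent, destroying any same-named destination first."""
    name = os.path.basename(os.path.normpath(src_dir))
    target = os.path.join(dst_parent, name)
    if os.path.lexists(target):
        if os.path.isdir(target) and not os.path.islink(target):
            shutil.rmtree(target)   # thousands of files can vanish right here
        else:
            os.remove(target)
    shutil.copytree(src_dir, target)
    return target
```

Copying Test2/Movies into Test1 this way leaves Test1/Movies holding Movies2.txt only. Movies1.txt is gone.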

'Replace' is easier to code - it's a lazy way of doing things - but it's hazardous and it's a bitch down the road for users.

The very fact that destinations must be destroyed before file operations complete is hazardous and forces API callers to themselves perform sanity checks. This can never be good and it goes against fundamental design principles: the file system can't protect itself if it's outsourced its survival to unknown external code it can't control.

David Neil Cutler

David Cutler's operating systems are object-based - they're not object-oriented, they're object-based: each component is sealed. Barring what Microsoft did to his Prism project, Cutler's had an impeccable record.

Trying file operations with a Cutler system like NTx yields a completely different experience. User land code makes a request of the file system (by calling SHFileOperation). All the parameters are sent in. At that point, the file system takes over.

The file system decides what to allow and what to disallow. The file system issues alerts as it sees fit: alerts about mixing file types, about overwriting files, and so forth. The file system retains control and as its second highest priority protects the user after it's protected itself.

That's a stark contrast with the slipshod way Apple have been doing business the past fourteen years.

Real Life Examples

√ Cyclical operations. Cyclical operations are bad enough with a file system that uses 'merge' rather than 'replace'. But with Apple file systems they're deadly. Apple's Finder doesn't protect you - but it gets away with murder, evidently confusing what's really going on and insisting it can't complete the destructive operations because the source or destination files are under the control of 'another process'. But there is no other process and that's got to be the worst worded 'user friendly' diagnostic ever generated.

√ Type mismatches. Apple love to let this one go - over the protests of users everywhere. Third party vendors are forced to build the same protective code into each of their applications because Apple obstinately refuse to admit their shortcomings.

People generally do not want to overwrite hives of perhaps thousands of files with 'myfile.txt' and it doesn't matter that they mistakenly give the paltry file system the go-ahead. These are the types of things a file system is supposed to take care of to protect the users. Apple categorically refuse - that refusal says a lot about how much they care for their users.

Xfile goes a lot farther than simply thwarting disasters such as the above: Xfile doesn't allow any type mismatches at all.

You can't mix any of the standard types: regular files, symbolic links, sockets, whiteouts, named pipes, character devices, directories, block devices. Odds are most people don't even know what most of those are. All the more reason to protect them.
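Checks of this kind are not hard to write. The sketch below is not Xfile's code - it's a hypothetical preflight routine, assuming a POSIX system, that refuses the two hazards described in this section: a destination that lies inside its own source, and a source and destination of different file types.

```python
import os
import stat


def file_type(path):
    """Return the file-type bits of path without following symbolic links."""
    return stat.S_IFMT(os.lstat(path).st_mode)


def is_cyclical(src, dst):
    """True if dst is src itself or lies somewhere inside src."""
    src_real = os.path.realpath(src)
    dst_real = os.path.realpath(dst)
    return os.path.commonpath([src_real, dst_real]) == src_real


def preflight(src, dst):
    """Raise ValueError if copying or moving src onto dst looks unsafe."""
    if is_cyclical(src, dst):
        raise ValueError("cyclical operation: destination is inside the source")
    if os.path.lexists(dst) and file_type(src) != file_type(dst):
        raise ValueError("type mismatch between source and destination")
```

With this in place, an attempt to drop 'myfile.txt' onto a directory of the same name is refused before anything is touched, as is an attempt to move a directory into one of its own subdirectories.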

Further Reading

Learning Curve: 4893378 FAQ

Industry Watch: Sanity Checks at Apple

Learning Curve: A Sanity Check for Apple

Learning Curve: 4893378: 'Expected Behaviour'

Developers Workshop: HFS: The Good & The Bad

Learning Curve: Rebel Scum: More Attacks on 'Expected Behaviour'

Learning Curve: Hosing OS X with Apple's Idea of 'Expected Behaviour'

See also the 'Massive Data Loss' section of 'On File Management (4)' linked below.

Enterprises Don't Care

Apple are discontinuing their luckless enterprise products. They never sold well to the enterprise.

Computer science always starts with the military. The Internet started with the military. From there it branches out to universities and then big business.

There's a reason Apple never succeeded in selling to the enterprise. And it's not that they didn't make an effort. Perhaps this article shines a bit of light on the real reason.

See Also

Learning Curve: On File Management (1)

Learning Curve: On File Management (2)

Learning Curve: On File Management (3)

Learning Curve: On File Management (4)

Learning Curve: On File Management (5)

Learning Curve: On File Management (6)

Learning Curve: On File Management (7)

Learning Curve: On File Management (8)

Microsoft TechNet: Chapter 17 - Disk and File System Basics

Developers Workshop: It Wasn't Good Then, It's No Better Now

Linux Administrators Guide: Using Disks and Other Storage Media

About Rixstep

Stockholm/London-based Rixstep are a constellation of programmers and support staff from Radsoft Laboratories who tired of Windows vulnerabilities, Linux driver issues, and cursing x86 hardware all day long. Rixstep have many years of experience behind their efforts, with teaching and consulting credentials from the likes of British Aerospace, General Electric, Lockheed Martin, Lloyds TSB, SAAB Defence Systems, British Broadcasting Corporation, Barclays Bank, IBM, Microsoft, and Sony/Ericsson.

Rixstep and Radsoft products are or have been in use by Sweden's Royal Mail, Sony/Ericsson, the US Department of Defense, the offices of the US Supreme Court, the Government of Western Australia, the German Federal Police, Verizon Wireless, Los Alamos National Laboratory, Microsoft Corporation, the New York Times, Apple Inc, Oxford University, and hundreds of research institutes around the globe.

All Content and Software Copyright © Rixstep. All Rights Reserved.
